OpenSpecimen supports importing CSV files to bulk upload data. Using this feature, you can add, update or delete data. This can be used for legacy data migration, integration with other instruments or databases, and adding/editing data in bulk.

From v9.1, strict date parsing is enforced. To accept dates with single-digit day or month values, use the format M/d/yyyy. To create new date formats, refer to the wiki page.
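To catch date problems before uploading, you can pre-check a date column against the M/d/yyyy format. The following is a minimal Python sketch and not OpenSpecimen's own parser. The function name `matches_m_d_yyyy` is hypothetical. It assumes month/day/year order and accepts one- or two-digit month and day values with a four-digit year.

```python
import re
from datetime import datetime

# M/d/yyyy: one- or two-digit month and day, four-digit year (assumed month-first order).
_M_D_YYYY = re.compile(r"^([0-9]{1,2})/([0-9]{1,2})/([0-9]{4})$")


def matches_m_d_yyyy(value: str) -> bool:
    """Return True if value has the M/d/yyyy shape and is a real calendar date."""
    match = _M_D_YYYY.match(value.strip())
    if not match:
        return False
    month, day, year = (int(group) for group in match.groups())
    try:
        datetime(year, month, day)  # rejects impossible dates such as 2/30/2024
    except ValueError:
        return False
    return True
```

For example, `matches_m_d_yyyy("1/5/2024")` passes, while `"2024-01-05"` and the impossible `"2/30/2024"` do not.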

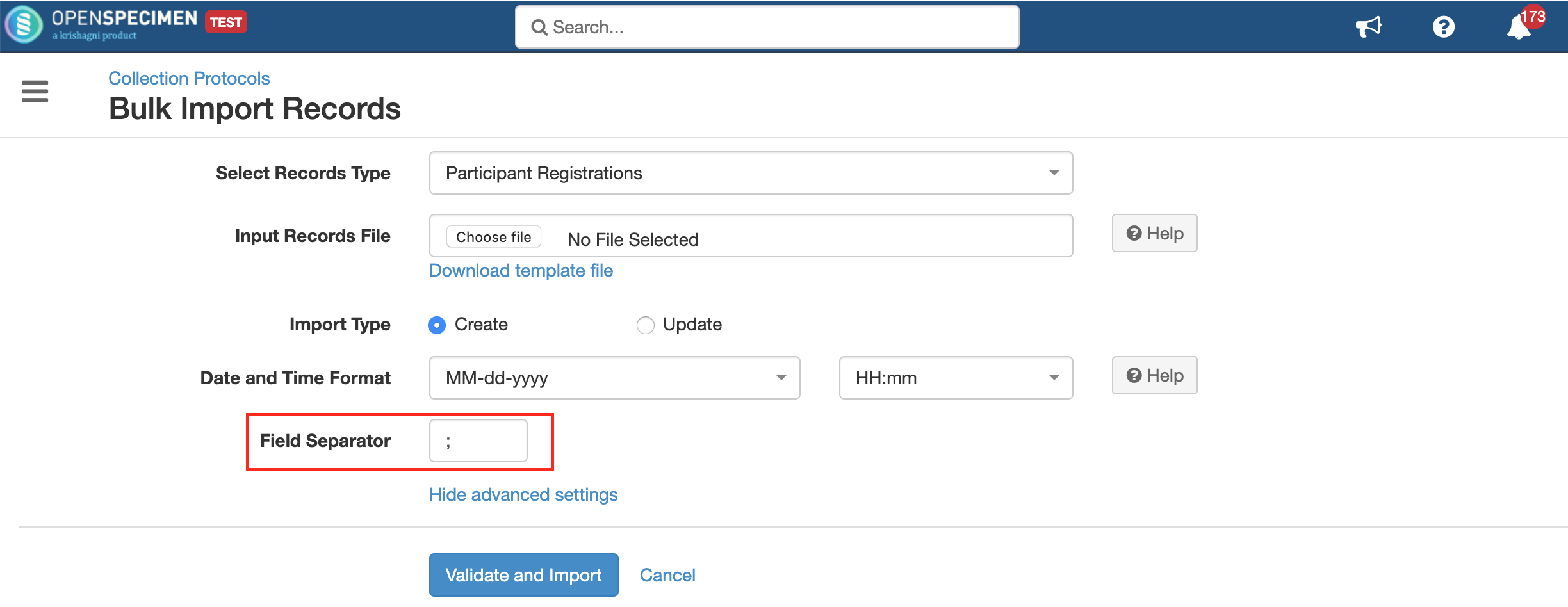

On the list view page of each data type, there is an 'Import' option in the top-left corner of the screen.

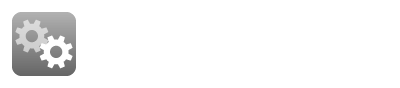

The data import file must be in CSV (Comma Separated Values) format. From v6.1, OpenSpecimen also supports semicolon-separated input files for bulk import. This is useful for files exported from external devices (e.g. liquid handling systems) that may output data in different formats.
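If you are unsure which separator an exported file uses, or you are on a version older than v6.1 that expects commas, a small script can detect the delimiter and rewrite the file as comma-separated. This is a minimal sketch using Python's standard `csv` module. The helper names are hypothetical, and `csv.Sniffer` is a heuristic that raises `csv.Error` when it cannot decide.

```python
import csv
import io


def detect_delimiter(sample_text: str) -> str:
    """Guess whether a CSV sample is comma- or semicolon-separated."""
    # Raises csv.Error if neither delimiter appears consistently across lines.
    dialect = csv.Sniffer().sniff(sample_text, delimiters=",;")
    return dialect.delimiter


def to_comma_separated(text: str) -> str:
    """Rewrite comma- or semicolon-separated text as comma-separated CSV."""
    delimiter = detect_delimiter(text)
    rows = csv.reader(io.StringIO(text), delimiter=delimiter)
    out = io.StringIO()
    # The csv writer quotes any value that itself contains a comma.
    csv.writer(out, lineterminator="\n").writerows(rows)
    return out.getvalue()
```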

The CSV file must follow the template for the data being imported. You can download the templates from the import option of each data type.

Yes, you can import participants, visits, and specimens in one go using the 'Master Specimens' template. For more details, refer to 'Master Template'.

When a bulk import job is completed, fails, or is aborted, an email notification is sent to the user who performed the import. The email is also CC'd to the 'Administrator Email Address' set under Settings → Email.

Yes, the "Super Administrator" can view the import jobs of all users. Note that users can bulk upload data from two places: the list view page of a data type, and within a collection protocol (CP).

Which jobs the Super Admin sees depends on where the user uploaded the file. In other words, jobs uploaded at the global level are not visible under a specific CP, and vice versa.

Users other than the Super Admin can view or download only their own import jobs. Because the system does not know what the file contains, it restricts access to the user who created the job and the Super Admin.
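The visibility rules for import jobs can be summarized as a small access check. This is an illustrative Python model only. The classes, fields, and functions (`ImportJob`, `User`, `can_access_job`, `jobs_listed`) are hypothetical and are not part of OpenSpecimen's API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ImportJob:
    created_by: str
    cp_id: Optional[int]  # hypothetical: None means uploaded at the global level


@dataclass
class User:
    login: str
    is_super_admin: bool


def can_access_job(user: User, job: ImportJob) -> bool:
    """Only the job's creator or a Super Admin may view or download it."""
    return user.is_super_admin or job.created_by == user.login


def jobs_listed(jobs: List[ImportJob], user: User, cp_id: Optional[int] = None) -> List[ImportJob]:
    """Jobs shown under 'View Past Imports' at one scope (global or a single CP)."""
    # Global jobs never appear under a CP, and CP jobs never appear globally.
    return [job for job in jobs if job.cp_id == cp_id and can_access_job(user, job)]
```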

The system validates the CSV for errors such as duplicate values in unique fields, incorrect date formats, and invalid dropdown values. Users can choose to validate the file before any record is uploaded.

By default, the system validates each bulk import job before processing it. If any record has errors, no records are inserted or updated. You can download the report, correct the errors, and upload the same report file.
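The all-or-nothing behavior of validation can be sketched as follows. This Python example is hypothetical. The column names, the allowed dropdown values, and the date format are assumptions for illustration, and the real checks depend on the template being imported.

```python
from datetime import datetime

# Hypothetical column names and dropdown values; real templates differ.
UNIQUE_COLUMN = "Specimen Label"
DATE_COLUMN = "Collection Date"
DROPDOWN_COLUMN = "Type"
ALLOWED_TYPES = {"Serum", "Plasma", "Whole Blood"}


def validate_rows(rows):
    """Return {row_index: [error messages]} for every row that has problems."""
    errors = {}
    seen = set()
    for i, row in enumerate(rows):
        problems = []
        label = row.get(UNIQUE_COLUMN, "")
        if label in seen:
            problems.append(f"Duplicate {UNIQUE_COLUMN}: {label}")
        seen.add(label)
        try:
            datetime.strptime(row.get(DATE_COLUMN, ""), "%m/%d/%Y")  # assumed format
        except ValueError:
            problems.append(f"Invalid date: {row.get(DATE_COLUMN)!r}")
        if row.get(DROPDOWN_COLUMN) not in ALLOWED_TYPES:
            problems.append(f"Invalid {DROPDOWN_COLUMN}: {row.get(DROPDOWN_COLUMN)!r}")
        if problems:
            errors[i] = problems
    return errors


def import_all_or_nothing(rows, save):
    """Save every row only if the whole file validates; otherwise save none."""
    errors = validate_rows(rows)
    if errors:
        return errors  # nothing is inserted or updated
    for row in rows:
        save(row)
    return {}
```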

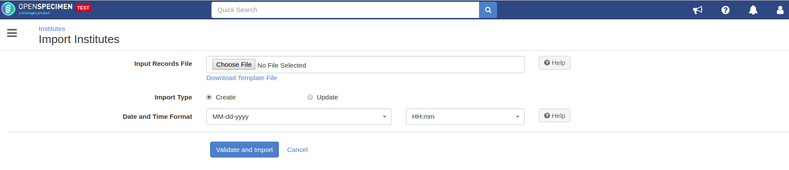

Validation is skipped for large files. If a file contains more records than the 'Pre-validate Records Limit', the records are processed even if some of them have errors. The limit is 10,000 records by default and can be changed in the admin settings.

To disable validation before importing, follow these steps:

1. Go to the home page and click the 'Settings' card.
2. Click the 'Common' module and select the property 'Pre-validate Records Limit'.
3. Enter '0' in the 'New Value' field and click 'Update'.

Note: When validation is disabled, the system shows errors for the failed records but still imports the successful ones.
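The way the 'Pre-validate Records Limit' chooses between the two modes can be modeled roughly as shown here. This is a sketch under assumptions. The function names are hypothetical, and it is a guess whether a file with exactly as many records as the limit is pre-validated.

```python
DEFAULT_PRE_VALIDATE_LIMIT = 10_000  # default 'Pre-validate Records Limit'


def should_pre_validate(record_count: int, limit: int = DEFAULT_PRE_VALIDATE_LIMIT) -> bool:
    """A limit of 0 disables pre-validation; larger files skip it (boundary assumed)."""
    return limit > 0 and record_count <= limit


def run_import(records, is_valid, save, limit=DEFAULT_PRE_VALIDATE_LIMIT):
    """Return (saved_count, failed_indexes) for a hypothetical import run."""
    if should_pre_validate(len(records), limit):
        failed = [i for i, record in enumerate(records) if not is_valid(record)]
        if failed:
            return 0, failed  # all-or-nothing: nothing is saved
        for record in records:
            save(record)
        return len(records), []
    # Without pre-validation, each record succeeds or fails on its own.
    saved, failed = 0, []
    for i, record in enumerate(records):
        if is_valid(record):
            save(record)
            saved += 1
        else:
            failed.append(i)
    return saved, failed
```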

For example, if a file of 100 records is imported without validation and 60 records fail while 40 are processed successfully, the user has to filter out the 60 failed records, correct them, and upload them again. The 'Validate and Import' feature avoids this by validating the complete file before any record is imported.
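To pull the failed records out of a downloaded report for correction, a short script can filter the rows by status. This is a minimal Python sketch. The column name `OS_IMPORT_STATUS` and the value `SUCCESS` are assumptions, so check the headers of your own report file before you use it. All columns are kept so that the output can be re-uploaded like the original report.

```python
import csv
import io

# Assumed report column and success value; verify against your downloaded report.
STATUS_COLUMN = "OS_IMPORT_STATUS"
SUCCESS_VALUE = "SUCCESS"


def failed_rows_for_reupload(report_text: str) -> str:
    """Return the report as CSV text containing only the rows that did not succeed."""
    reader = csv.DictReader(io.StringIO(report_text))
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=reader.fieldnames, lineterminator="\n")
    writer.writeheader()
    for row in reader:
        if (row.get(STATUS_COLUMN) or "").strip().upper() != SUCCESS_VALUE:
            writer.writerow(row)
    return out.getvalue()
```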

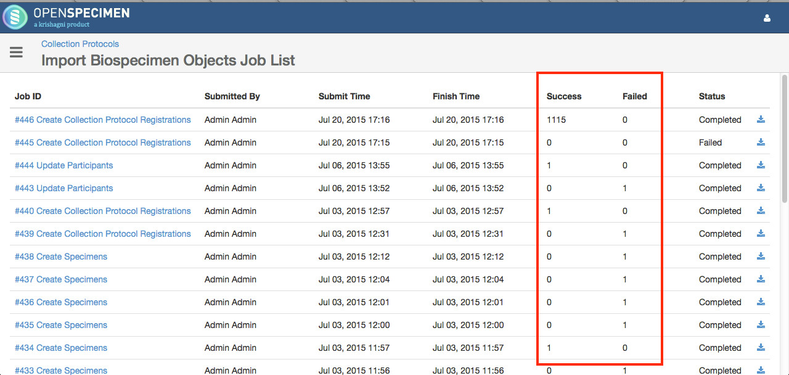

Once a CSV file is imported, a report is generated under 'View Past Imports' in the import feature. This dashboard shows the status of every data import.

From the list view page, click on the 'Import' button → View Past Imports

If you are using bulk import with a CP, you can click on 'More' → View Past Imports


Watch the video below to learn about common mistakes made during CSV import and how to avoid them.

Reports are generated for every data upload. These are available under 'View Past Imports'.

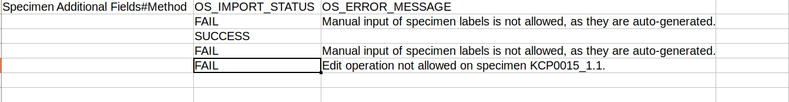

The report shows whether each record was imported successfully or failed. For failed records, you can download the bulk import job file, which shows the import status of each record and an error message explaining why it failed.

To fix the failed records, correct the data in the file (or in the application, if the error comes from existing data) and re-upload them.

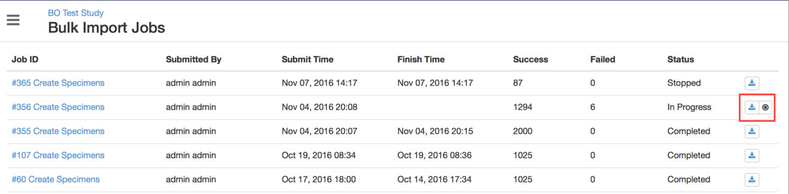

To abort a bulk import job, click the 'Abort' icon for that job on the jobs page.

This is useful if you have uploaded a large file (e.g. 10,000 records), realize that some records contain mistakes, and want to stop the job instead of waiting for the whole file to be processed.

If the file is imported without validation, records processed before the job is aborted are saved and are not rolled back.

If you have a large volume of data to import (hundreds of thousands or millions of records), you can follow the steps below to speed up the import: